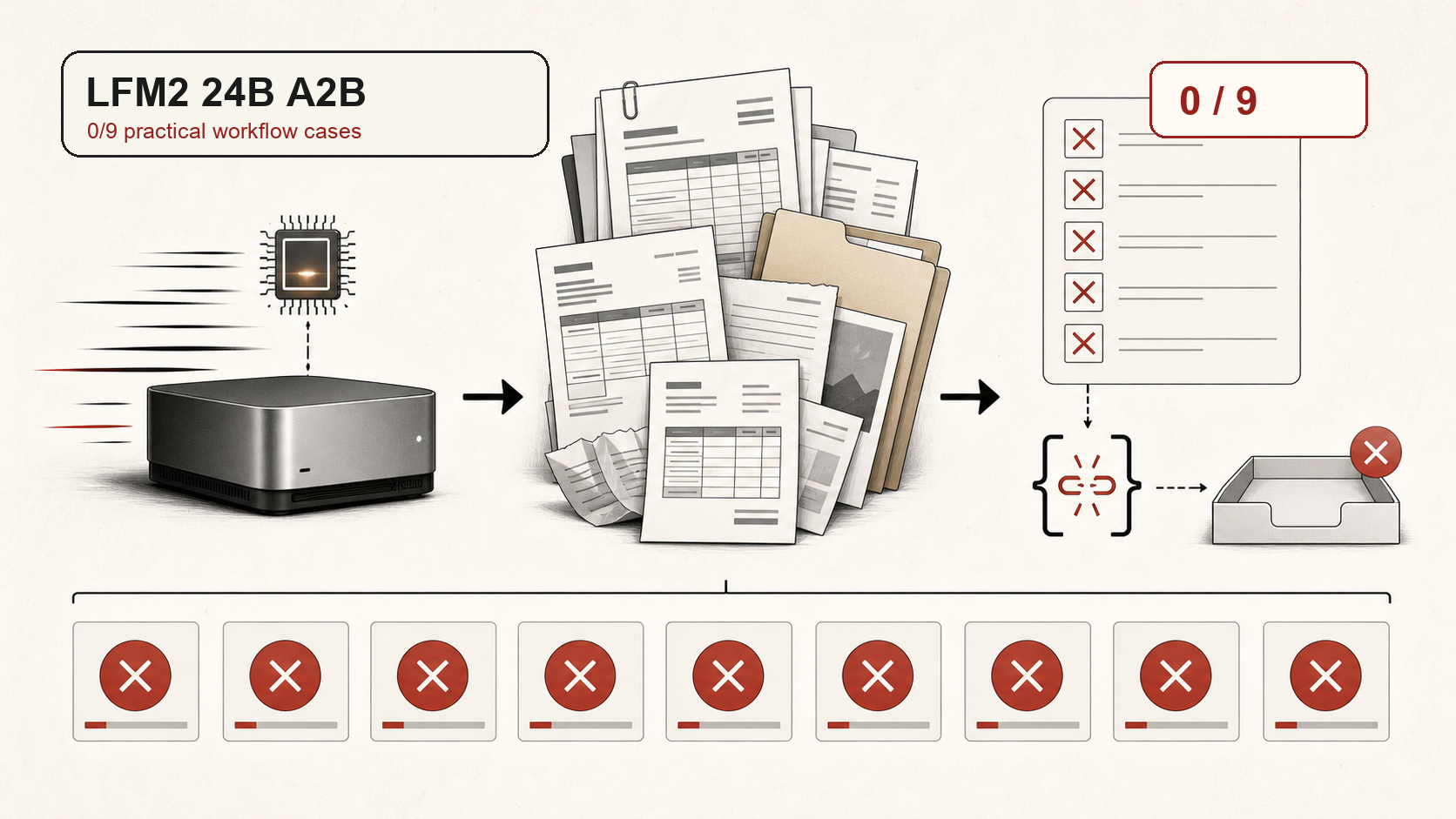

LFM2 24B A2B: fast smoke test, zero workflow wins

LFM2 24B A2B passed a trivial JSON smoke test, then failed all nine current Local Model Bench paperwork cases. The issue was not speed. It was task completion: wrong audit logic in the scan cases, and no valid artifacts in the agentic workflow cases.

LFM2 24B A2B is an interesting local candidate on paper: a 24B total-parameter mixture-of-experts model with roughly 2B active parameters, pitched for low-latency local use.

That makes it a good fit for the question Local Model Bench actually asks: not whether a model can answer a toy prompt, but whether it can survive private paperwork, messy folders, and exact final artifacts. On that version of the test, LFM2 did not survive.

Model Context

- Model family: Liquid AI LFM2

- Run type: Local LM Studio run

- Local hardware: Mac mini M4, 64 GB unified memory

- Architecture listing: 24B total / 2B active MoE

- Benchmark role: Fast local worker candidate

Positioned As

- Liquid's LFM2-24B-A2B release describes the model as a sparse MoE with 24B total parameters and 2B active parameters, designed for low latency and efficient local deployment.

- The LM Studio listing positions it as a local model with 32K context and support for tool use, embeddings, structured output, and reasoning.

- Those claims make the practical test fair: if the model is a local worker candidate, it should at least attempt structured paperwork and workflow closure.

What We Actually Tested

- The model first passed a trivial LM Studio smoke test: return a tiny JSON object with a fixed sum.

- It then ran all five generated-invoice Paperwork Trial cases. It produced JSON, but the audit logic failed across the board: invoice classification, ignored document IDs, evidence, warnings, totals, and proof codes.

- It also ran all four Paperwork Workflow cases. These require agentic-style file selection, normalized artifacts, protected input folders, and final output files.

- The workflow cases failed harder: no valid `audit_result.json`, missing required artifacts, and tool-call traces that did not match the runner protocol.
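
The workflow requirements above (required output files, a valid `audit_result.json`, and an untouched input folder) can be sketched as a small post-run check. This is a minimal illustration, not the benchmark's actual runner: the function names, the folder layout, and the list of required artifacts are assumptions, and only the `audit_result.json` filename comes from the write-up.

```python
import hashlib
import json
from pathlib import Path


def snapshot(folder: Path) -> dict[str, str]:
    """Map each file's relative path to a SHA-256 digest of its contents."""
    return {
        str(p.relative_to(folder)): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(folder.rglob("*"))
        if p.is_file()
    }


def check_workflow_run(output_dir: Path, required: list[str],
                       inputs_before: dict[str, str], input_dir: Path) -> list[str]:
    """Return failure reasons for one run; an empty list means every check passed.

    Hypothetical checker: the real runner's rules are not described in detail.
    """
    failures = []

    # Every required artifact must exist as a file in the output folder.
    for name in required:
        if not (output_dir / name).is_file():
            failures.append(f"missing artifact: {name}")

    # If the audit result exists, it must at least parse as JSON.
    result = output_dir / "audit_result.json"
    if result.is_file():
        try:
            json.loads(result.read_text(encoding="utf-8"))
        except json.JSONDecodeError:
            failures.append("audit_result.json is not valid JSON")

    # The protected input folder must be byte-for-byte unchanged.
    if snapshot(input_dir) != inputs_before:
        failures.append("protected input folder was modified")

    return failures
```

In a setup like this, `snapshot(input_dir)` would be taken before the model runs and passed in as `inputs_before`, so any edit, deletion, or new file inside the protected folder shows up as a failure afterwards.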

What Worked

- Fast local responses in LM Studio.

- Passed a trivial JSON smoke test.

- Produced syntactically parseable JSON in the invoice-image cases.
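
The gap between "parseable JSON" and "correct JSON" is easy to show with a smoke test of the kind described. The sketch below is an assumption about what such a check might look like: the exact prompt, the `sum` key, and the operands used in the real run are not given, so they are hypothetical here.

```python
import json


def passes_smoke_test(raw: str, a: int, b: int) -> bool:
    """True if raw is a JSON object whose "sum" field equals a + b.

    The "sum" key and the operands are illustrative, not the real test's schema.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False  # output was not JSON at all
    return isinstance(data, dict) and data.get("sum") == a + b


print(passes_smoke_test('{"sum": 5}', 2, 3))  # True
print(passes_smoke_test('{"sum": 6}', 2, 3))  # False: parseable, but wrong
print(passes_smoke_test('sum is 5', 2, 3))    # False: not JSON
```

The second call is the interesting one: the output parses cleanly and still fails, which is the same pattern the invoice cases showed at a larger scale.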

Where It Broke

- Zero resolved cases across the current practical suite.

- Zero core passes across both scanned-paperwork and workflow cases.

- Approved invoices that should have gone to review.

- Used filenames instead of visible document IDs.

- Failed the agentic file-artifact workflow completely.

Readout

LFM2 24B A2B looked fast, but speed did not translate into useful paperwork completion. For this benchmark, it is a clean fail: not a near miss, not a formatting-only problem, and not a case of one bad task. It went 0/9.
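
For reference, the 0/9 figure is the two suites added together: five Paperwork Trial cases and four Paperwork Workflow cases, none resolved. A minimal tally, with a hypothetical `SuiteResult` record standing in for whatever the real runner emits:

```python
from dataclasses import dataclass


@dataclass
class SuiteResult:
    """Per-suite counts; this record type is illustrative only."""
    name: str
    cases: int
    resolved: int


def overall(results: list[SuiteResult]) -> str:
    """Combine per-suite counts into a single 'resolved/total' score."""
    resolved = sum(r.resolved for r in results)
    cases = sum(r.cases for r in results)
    return f"{resolved}/{cases}"


results = [
    SuiteResult("Paperwork Trial", cases=5, resolved=0),
    SuiteResult("Paperwork Workflow", cases=4, resolved=0),
]
print(overall(results))  # 0/9
```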