What the scores hide.

Short notes on selected runs. These are not general model reviews, only failure patterns observed on this benchmark.

How to read these

Each note separates strict resolution from core task understanding. A model can understand the documents and still fail the workflow contract.

Local runs and reference/API runs are marked separately.

Qwen3 VL 32B: good reading, weak closure

A paid OpenRouter vision reference run that read many document facts correctly, then repeatedly failed on proof codes, duplicate-risk logic, and workflow closure.

Gemma 4 26B A4B: the strongest local baseline so far

The best local LM Studio result in the current public table: not perfect, but unusually solid across both scanned invoices and agentic paperwork folders.

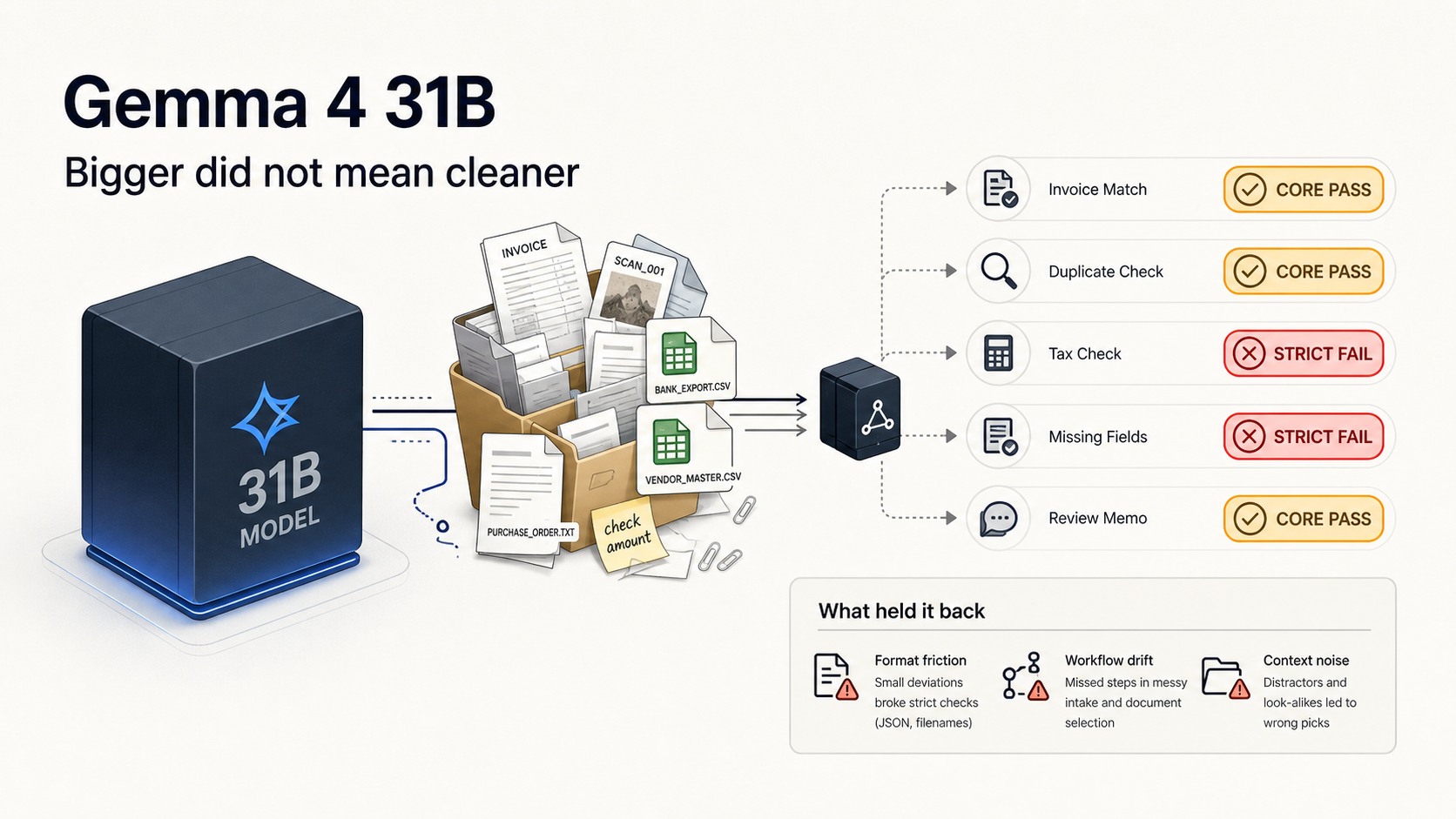

Gemma 4 31B: bigger did not mean cleaner

The 31B run scored worse than expected. The interesting signal is not that the model is useless, but that the exact workflow-closure requirements penalized it heavily.

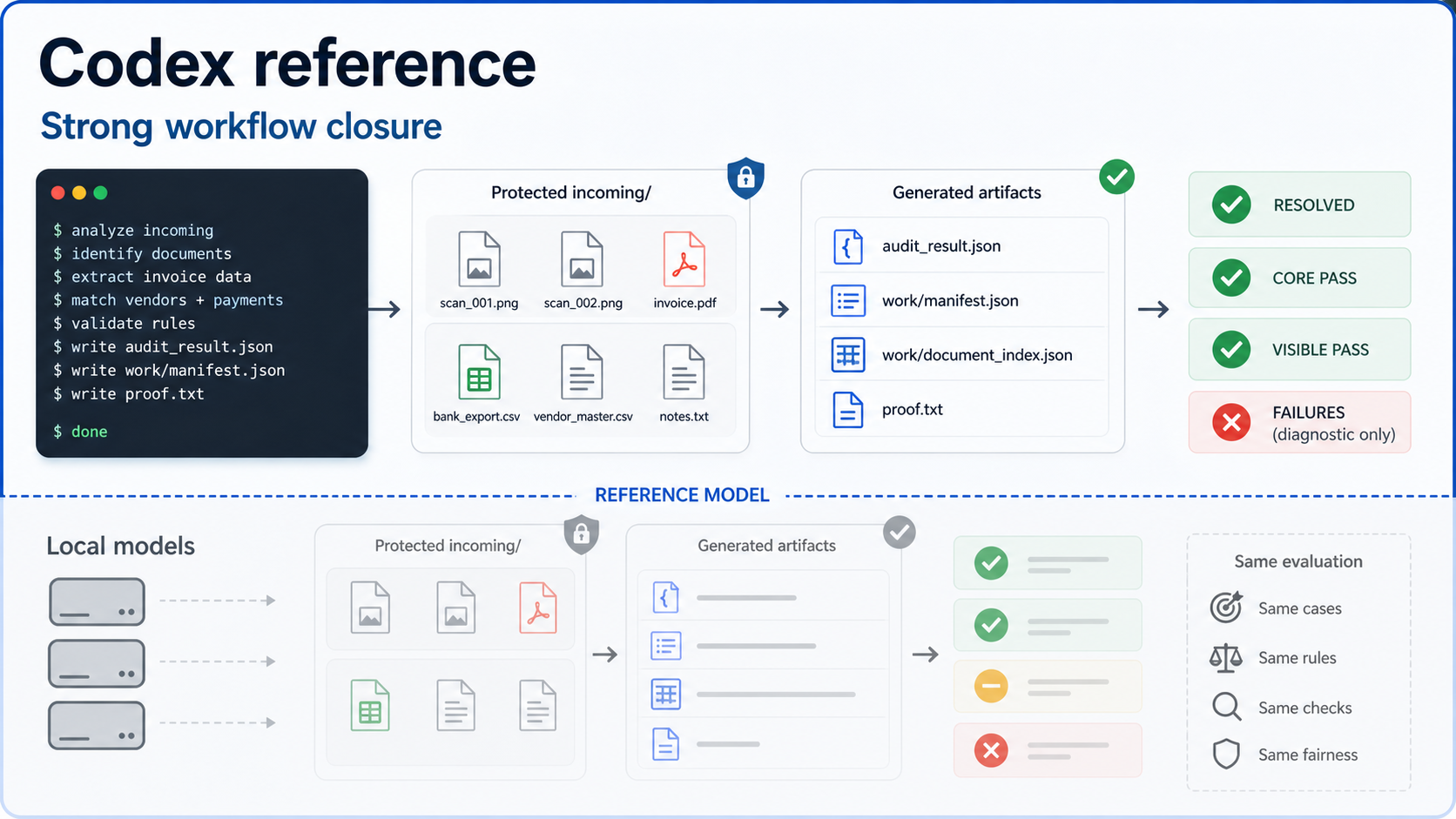

Codex reference: strong workflow closure

Codex is not a local LM Studio run. It is kept as a reference line for what stronger agentic tooling does on the same public cases.